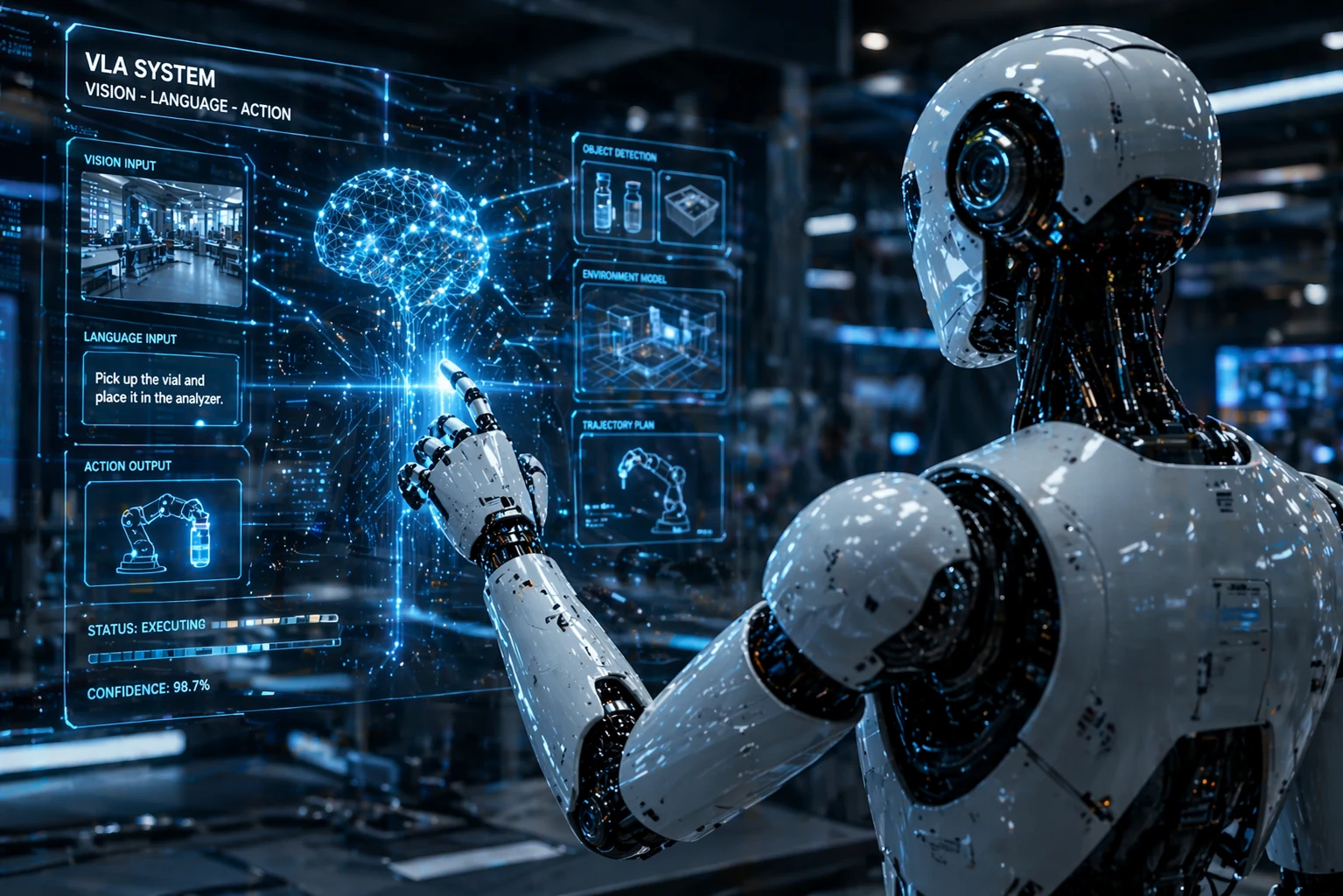

Robotics has entered a new phase. Instead of relying only on narrow, pre-programmed motions, newer systems are starting to combine vision, language, planning, and action into one smoother pipeline. That means a robot can look at a scene, understand a spoken request, and respond with behavior that feels far more flexible than old-school automation.

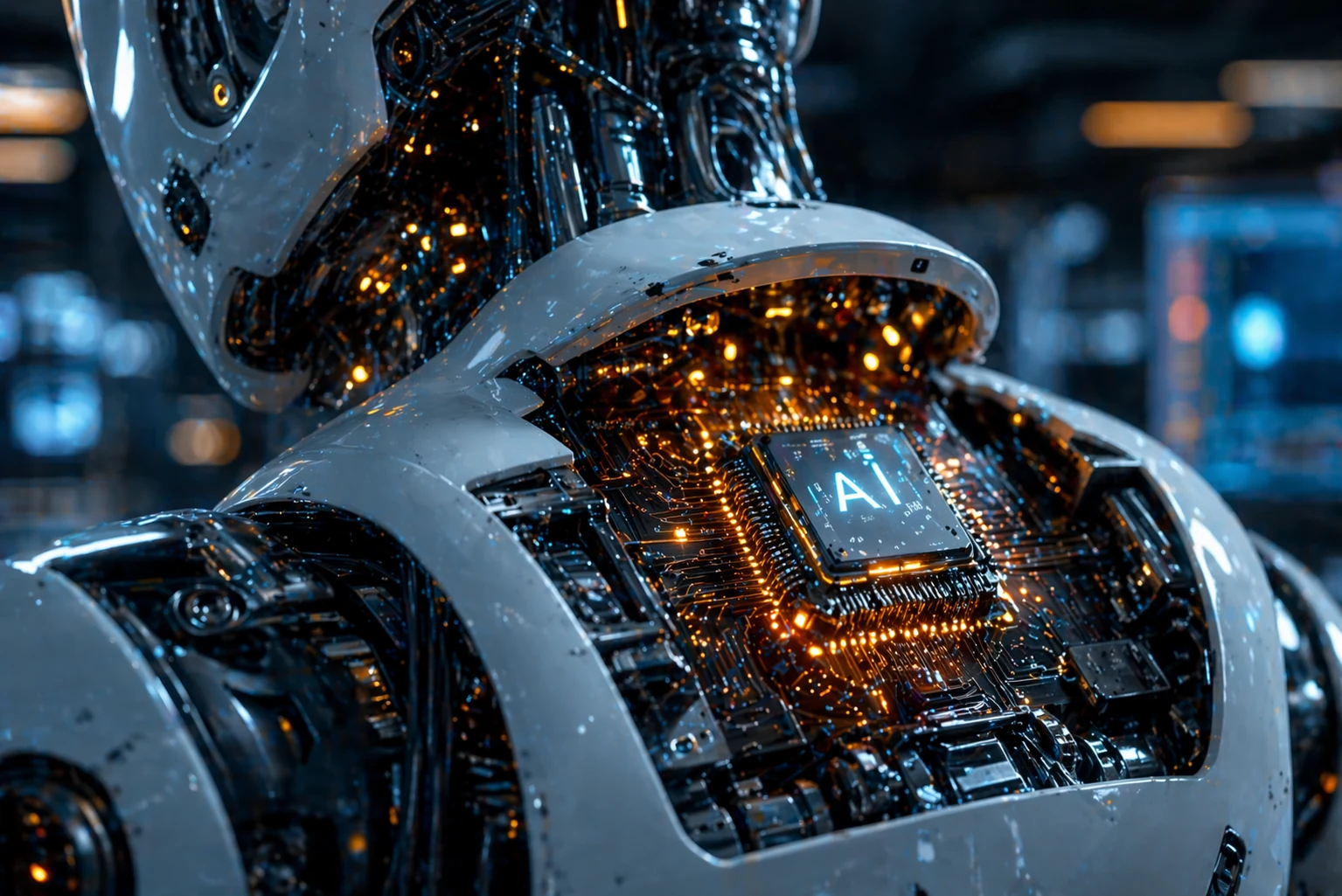

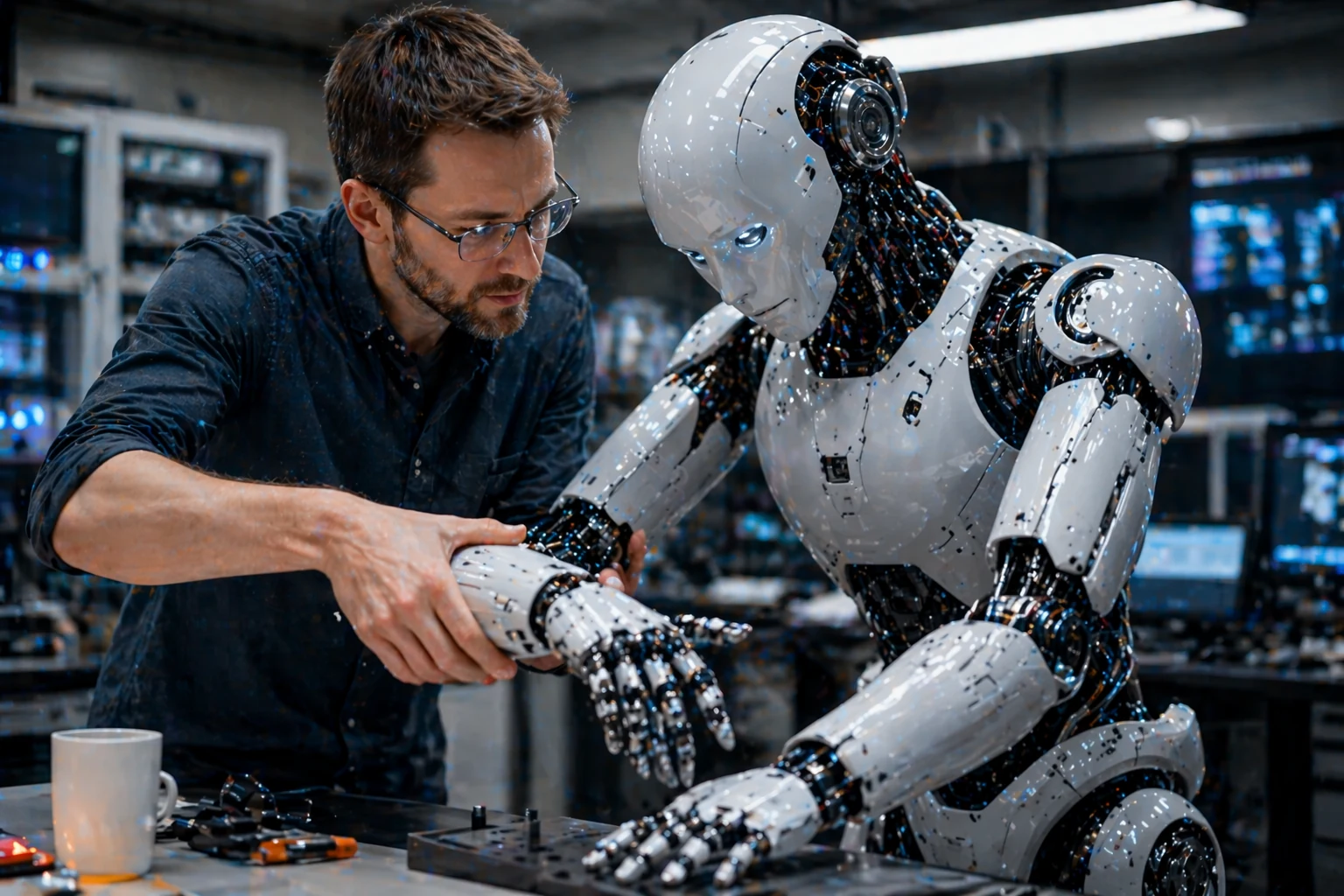

Edge AI is also changing the game by moving more intelligence onto the machine itself. That cuts the round-trip delay to the cloud, improves responsiveness, and makes robots more useful in fast-moving, real-world environments. On top of that, better teaching methods now let people demonstrate tasks directly instead of programming every step from scratch.

Breakthrough Demonstrations

These demos show where the field gets interesting: shared intelligence, agile movement, and machine behavior that looks far more fluid than what most people still picture when they think of robots.

Smarter Decisions at the Edge

When more processing happens locally, robots can respond faster and depend less on a remote connection. That matters in factories, hospitals, warehouses, and homes where a half-second delay can be the difference between smooth action and clumsy failure.

Edge autonomy also helps with privacy, reliability, and safety. Sensor data can stay on the device instead of being sent to a remote server, and a robot that can reason on-device is more resilient when connectivity drops or when quick judgment matters most.

Teamwork, Coordination, and Scale

One robot doing one task is old news. The real jump happens when multiple robots can work together without getting in each other’s way. Shared models, fleet coordination, and better planning software are making that possible.

That is where robotics starts to feel less like a single-machine story and more like a systems story. The more coordinated the fleet, the more practical the deployment becomes.

Why It Matters

You are not just looking at shinier robots. You are looking at a shift toward machines that can generalize more effectively, react faster, and be taught in ways that are easier for real people to use.

- Generalization: learn once, apply skills more broadly.

- Edge autonomy: reduce latency and dependence on the cloud.

- Teamwork: coordinate more robots with less wasted motion.

- Accessibility: natural language and demonstration lower the barrier to teaching machines.

- Real deployment: adaptive robotics is moving from labs into actual work environments.

🤖 Experience AI Robotics Yourself

The systems you are reading about are still advancing fast, but consumer robots already give you a taste of how interaction, personality, and autonomy are evolving.

In Summary

AI and robotics are moving toward systems that can see better, reason faster, learn more naturally, and cooperate at larger scale. That is why this wave feels different. It is not just about stronger hardware. It is about machines becoming more capable across the whole chain from perception to action.
